Modern software teams operate in environments where change is constant.

New features reach production quickly. Upstream libraries, frameworks, and APIs update on independent schedules. The number of supported devices, OS versions, and browsers continues to grow every year.

These conditions expand the scope of validation required during development. Continuous testing embeds automated checks throughout the pipeline and provides timely feedback about application behavior in different environments.

It helps reduce the volume of unverified work that carries into later stages and strengthens the consistency of each build.

Naturally, as teams mature, their expectations for testing platforms become more defined: predictable results, stable runtimes on both real and virtual devices, support for parallel workloads, and coverage tailored to their user base.

TestGrid’s global telemetry captures these patterns at scale, with millions of automated tests executed across a wide range of configurations.

If you want to understand how they translate into measurable performance, check out the 2025 TestGrid Continuous Testing Benchmark Report.

It charts how continuous testing performs across large, multi-environment test workloads. Before we review the findings, let’s look at how continuous testing typically works in practice.

- The 2025 Continuous Testing Benchmark Report from TestGrid shows how modern teams manage reliability, speed, and coverage across diverse environments.

- Continuous testing workflows connected directly to CI pipelines help reduce unverified work and improve build consistency.

- Our benchmark analysis shows a 75% average pass rate in 2025, up from 59% in 2024, with gains linked to tighter test design and earlier validation in the pipeline.

- Execution speed improved year over year: the average test duration fell to 1 minute 48 seconds, a 41% improvement.

- Environment coverage continues to expand, with teams testing an average of 7.9 platform combinations and relying more on real devices for accuracy.

- AI-assisted debugging is now routine, with 70% of organizations using TestGrid’s AI Failure Analysis for clearer signals and faster investigation.

What Continuous Testing Looks Like in Practice

1. Connected directly to CI pipelines

Automated tests are triggered by each commit, pull request, or merge. Each change produces a clear pass/fail signal that feeds directly into development decisions.
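The pass/fail signal usually reaches the pipeline as a process exit code. The sketch below assumes a hypothetical `CheckResult` record produced by your test runner and shows one way to turn a batch of results into that signal. It is not tied to any particular CI system or to TestGrid’s API.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    """One automated test outcome (hypothetical shape; adapt it to your runner's report)."""
    name: str
    passed: bool


def gate(results: list[CheckResult]) -> int:
    """Return a CI-style exit code: 0 if every test passed, 1 if any failed."""
    failures = [r.name for r in results if not r.passed]
    for name in failures:
        print(f"FAILED: {name}")
    print(f"{len(results) - len(failures)}/{len(results)} tests passed")
    return 1 if failures else 0


# Example batch from a single commit: one failure turns the whole signal red.
sample = [CheckResult("login", True), CheckResult("checkout", False)]
exit_code = gate(sample)  # prints "FAILED: checkout" and "1/2 tests passed"
```

In a pipeline step, passing the return value to `sys.exit()` marks the job as failed whenever any test fails.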

2. Execution on real target environments

Test suites run against combinations of browsers and operating systems for web applications, emulators and simulators for mobile testing, physical devices for real-world validation, and APIs for backend workflows.

This lets you catch issues that appear only under specific configurations, and every configuration you test adds to your overall coverage footprint.
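Web suites are commonly fanned out over a browser and operating-system matrix. This minimal sketch uses made-up target lists, builds the matrix with `itertools.product`, and drops pairs that cannot exist, such as Safari on Windows.

```python
from itertools import product

# Hypothetical target lists; in practice these would come from your own usage data.
BROWSERS = ["chrome", "firefox", "safari"]
OPERATING_SYSTEMS = ["windows-11", "macos-14"]

# Pairs that cannot run together (Safari is only available on Apple platforms).
UNSUPPORTED = {("safari", "windows-11")}


def build_matrix(browsers, systems, unsupported):
    """Return every valid (browser, os) combination a web suite should run against."""
    return [
        (browser, os_name)
        for browser, os_name in product(browsers, systems)
        if (browser, os_name) not in unsupported
    ]


matrix = build_matrix(BROWSERS, OPERATING_SYSTEMS, UNSUPPORTED)
for browser, os_name in matrix:
    print(f"run suite on {browser} / {os_name}")
print(f"{len(matrix)} configurations")  # 3 browsers x 2 systems - 1 unsupported = 5
```

Each tuple would typically become one pipeline job, so adding a browser or OS multiplies the workload. This is one reason parallel execution matters as coverage grows.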

3. A continuous record of behavior over time

Because tests run throughout the development lifecycle, you’re able to maintain a detailed history of execution outcomes. Over time, patterns reveal where:

- Reliability is strong

- Failure clusters appear

- Test suites are slowing down

These observations align with three benchmark indicators: pass rate, execution time, and environment breadth.
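Given a history of executions, all three indicators can be computed directly. The `Execution` schema and the sample records below are hypothetical, invented for illustration rather than taken from the benchmark data.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Execution:
    """One recorded test run (hypothetical schema)."""
    test: str
    environment: str  # e.g. "chrome/windows-11" or "pixel-8/android-14"
    passed: bool
    duration_s: float


def summarize(history: list[Execution]) -> dict:
    """Compute pass rate, mean execution time, and environment breadth."""
    if not history:
        raise ValueError("history must contain at least one execution")
    return {
        "pass_rate": sum(e.passed for e in history) / len(history),
        "avg_duration_s": mean(e.duration_s for e in history),
        "environments": len({e.environment for e in history}),
    }


# Made-up sample history, not benchmark data.
history = [
    Execution("login", "chrome/windows-11", True, 90.0),
    Execution("login", "safari/macos-14", True, 120.0),
    Execution("checkout", "chrome/windows-11", False, 150.0),
    Execution("checkout", "pixel-8/android-14", False, 60.0),
]
print(summarize(history))
# {'pass_rate': 0.5, 'avg_duration_s': 105.0, 'environments': 3}
```

Computing the same summary per week or per release, rather than once, is what turns the record into a trend you can act on.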

What We Learned From the 2025 TestGrid Continuous Testing Benchmark Report

This year’s benchmark is based on 7.3 million automated test executions on virtual and real devices across 55,800 organizations. Several patterns stood out in the dataset:

1. Pass rates show steady gains in reliability

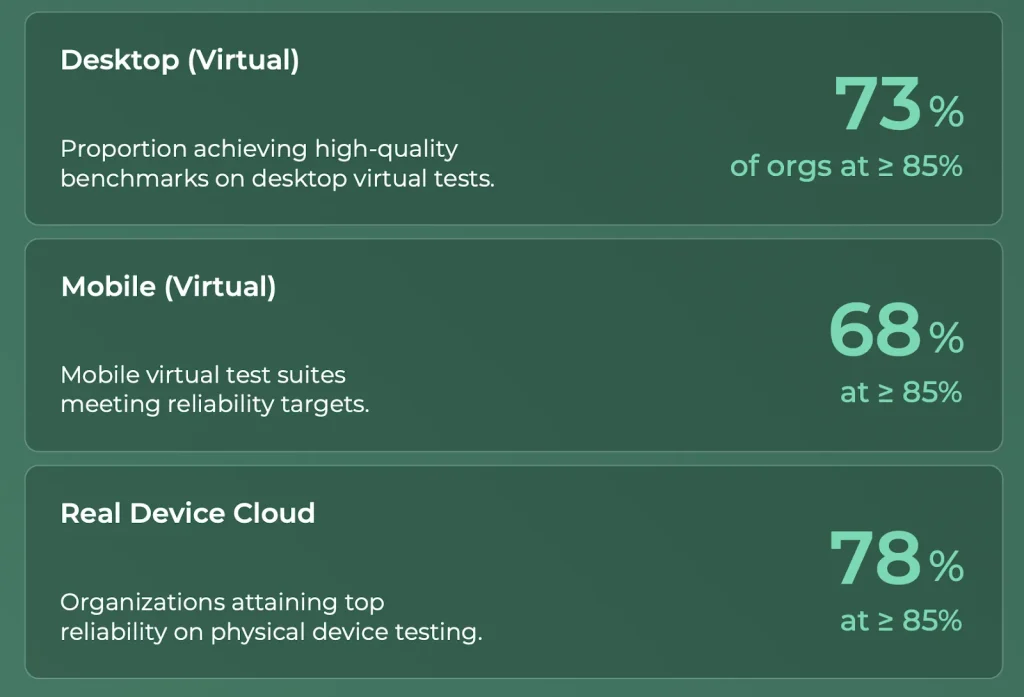

Automated runs recorded an average pass rate of 75%, a notable improvement over the 59% recorded in 2024. Teams that crossed the 85% reliability benchmark tended to follow consistent practices, including:

- Tighter test design

- Earlier checks in their CI pipelines

- Greater use of mechanisms that stabilize UI-dependent tests

Comparing virtual desktop, virtual mobile, and real-device executions, we found that each category followed its own reliability curve, but the overall trend points upward.

2. Execution speeds support fast delivery cycles

This year’s average test duration was 1 minute 48 seconds, a 41% year-over-year improvement. Shorter test cycles make pipelines more predictable and let teams ship changes more frequently. The fastest organizations shared several practices:

- Parallel execution

- Cleaner test architecture

- Fewer external dependencies that introduce delays
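Parallel execution shortens wall-clock time when tests are independent and spend most of their time waiting on browsers, devices, or networks. The sketch below simulates four such tests with `time.sleep` (a stand-in assumption, not real test code) and runs them on a thread pool.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical tests and simulated durations in seconds.
TEST_DURATIONS = {"login": 0.3, "search": 0.3, "checkout": 0.3, "profile": 0.3}


def run_case(item: tuple[str, float]) -> tuple[str, bool]:
    """Stand-in for a real test: sleeps to mimic waiting on a browser or device."""
    name, seconds = item
    time.sleep(seconds)
    return name, True


start = time.perf_counter()
with ThreadPoolExecutor(max_workers=4) as pool:
    # map() preserves input order, so results line up with TEST_DURATIONS.
    results = list(pool.map(run_case, TEST_DURATIONS.items()))
elapsed = time.perf_counter() - start

print(results)
print(f"parallel: {elapsed:.2f}s, sequential would take ~{sum(TEST_DURATIONS.values()):.2f}s")
```

Threads suit this I/O-bound case; CPU-heavy work would need processes instead. Either way, parallelism only pays off when tests do not share mutable state such as user accounts or database rows.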


3. Coverage reflects the diversity of real user conditions

Organizations exercised 7.9 platform combinations on average, reflecting the range of environments their users rely on in production.

Coverage depth has continued to expand: 89% of organizations tested on at least five physical devices, and 54% tested on 30 or more. These numbers show how much surface area teams now consider essential when validating apps.
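One way to tie coverage to real user conditions is to rank environments by their share of user sessions and test the smallest set that covers a chosen fraction of traffic. The sketch below assumes the shares come from your own analytics; the labels, numbers, and 90% target are illustrative only.

```python
def pick_environments(usage_share: dict[str, float], target: float = 0.9) -> list[str]:
    """Pick the most-used environments until their combined share reaches `target`.

    `usage_share` maps an environment label to its fraction of user sessions
    (hypothetical input); the 0.9 default is an arbitrary example threshold.
    """
    chosen, covered = [], 0.0
    # Greedy: take environments in descending order of usage.
    for env, share in sorted(usage_share.items(), key=lambda kv: kv[1], reverse=True):
        if covered >= target:
            break
        chosen.append(env)
        covered += share
    return chosen


usage = {
    "chrome/windows": 0.42,
    "safari/ios": 0.25,
    "chrome/android": 0.18,
    "safari/macos": 0.08,
    "firefox/windows": 0.04,
    "edge/windows": 0.03,
}
print(pick_environments(usage))
# ['chrome/windows', 'safari/ios', 'chrome/android', 'safari/macos']
```

Environments below the cutoff can still be scheduled less often rather than dropped entirely.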

4. Testing behavior varies by industry

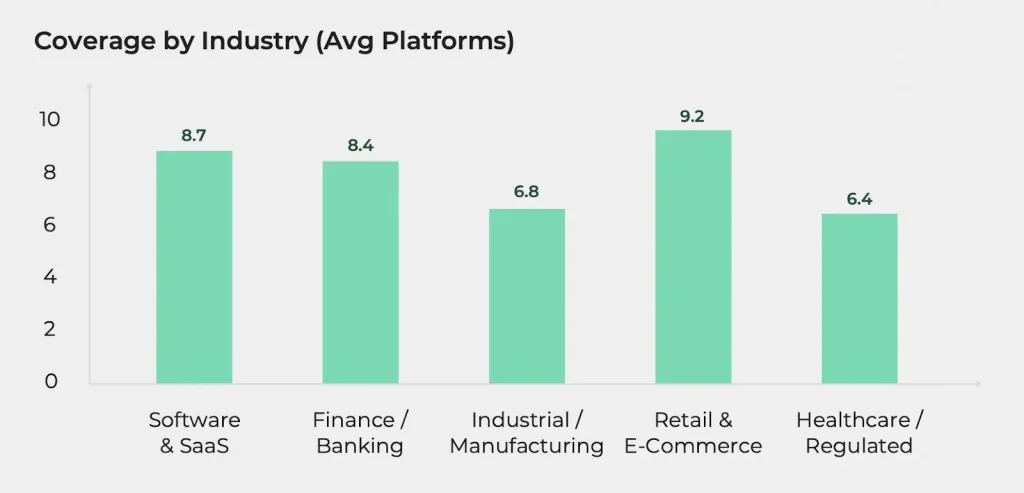

We also saw meaningful differences between sectors, showing how testing priorities shift when organizations operate under different constraints.

Technology and finance teams, for instance, generally recorded higher pass rates. Regulated industries such as manufacturing and healthcare, on the other hand, maintained broader coverage, driven by compliance requirements and the need to validate behavior under more conditions.

Runtime patterns differed as well. Industries working with complex or tightly integrated systems, such as healthcare, industrial, and manufacturing, recorded longer execution times.

Sectors with more modern or modular architectures, including retail, eCommerce, and SaaS, achieved shorter runtimes due to streamlined workflows and fewer external dependencies.

5. AI adoption continues to increase among testing teams

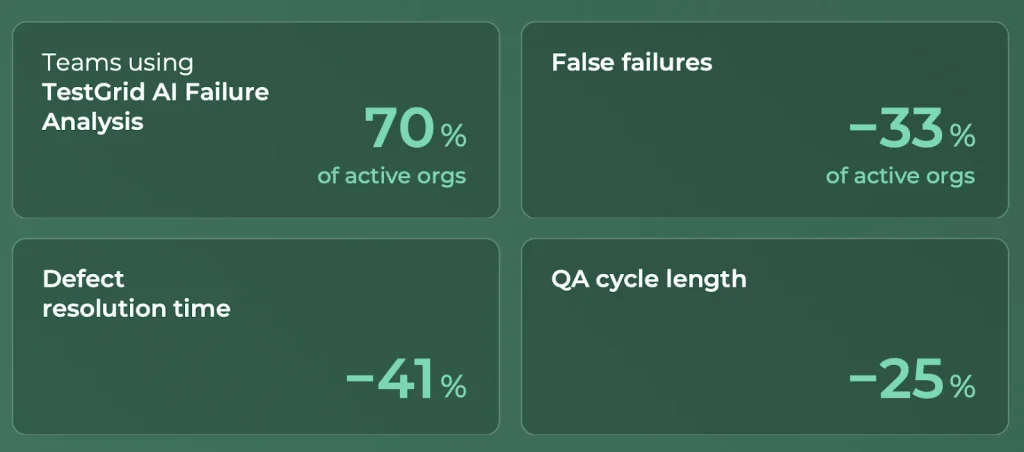

According to this year’s report, 70% of organizations used TestGrid’s AI Failure Analysis to handle growing automation workloads.

The feature helped identify unstable tests, group recurring issues, and surface patterns that would otherwise take significant time to isolate manually. Teams using AI-assisted analysis reported clearer signals, fewer false failures, and shorter resolution cycles.
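The sketch below does not reproduce TestGrid’s AI Failure Analysis. It is a deliberately simple, rule-based heuristic for the same goal of spotting unstable tests: a test that both passes and fails on the same commit and environment is flagged as flaky, because nothing in the code or configuration changed between those runs. The tuple record format is a hypothetical assumption.

```python
from collections import defaultdict


def find_flaky(runs):
    """Flag tests that both passed and failed for the same commit and environment.

    `runs` is an iterable of (test, commit, environment, passed) tuples,
    a hypothetical record format. Mixed outcomes with identical inputs point
    to instability in the test rather than a real regression.
    """
    outcomes = defaultdict(set)
    for test, commit, env, passed in runs:
        outcomes[(test, commit, env)].add(passed)
    # A set holding both True and False means the outcome flipped.
    return sorted({test for (test, _, _), seen in outcomes.items() if len(seen) == 2})


runs = [
    ("login", "a1b2c3", "chrome/windows-11", True),
    ("login", "a1b2c3", "chrome/windows-11", False),     # retry flipped the result
    ("checkout", "a1b2c3", "chrome/windows-11", False),
    ("checkout", "a1b2c3", "chrome/windows-11", False),  # fails consistently
]
print(find_flaky(runs))  # ['login']
```

A consistently failing test such as `checkout` is not flagged: it more likely reflects a genuine defect than an unstable test.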

One thing’s clear: as test suites expand in size and complexity, AI in software testing is becoming a standard part of day-to-day operations. It now functions as a core component of triage and investigation workflows rather than an optional or experimental enhancement.
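Grouping recurring issues, one of the triage tasks described earlier in this section, can be approximated without machine learning by normalizing error messages into signatures. This sketch masks numbers and hex IDs with regular expressions; the messages are invented examples, and a production tool would likely also draw on stack traces and environment data.

```python
import re
from collections import Counter


def signature(message: str) -> str:
    """Reduce an error message to a stable signature by masking volatile details.

    Hex IDs and numbers (timeouts, element IDs, values) are replaced so that
    failures with the same root cause collapse into one group.
    """
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)
    masked = re.sub(r"\d+", "<n>", masked)
    return masked.strip()


# Invented failure messages for illustration.
failures = [
    "TimeoutError: element #btn-42 not clickable after 30000 ms",
    "TimeoutError: element #btn-17 not clickable after 30000 ms",
    "AssertionError: expected total 19.99, got 0",
    "TimeoutError: element #btn-8 not clickable after 15000 ms",
]
groups = Counter(signature(m) for m in failures)
for sig, count in groups.most_common():
    print(f"{count}x  {sig}")
```

The three timeouts collapse into one group, so an engineer investigates one cause instead of three separate failures.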

How This Year’s Continuous Testing Statistics and Insights Can Inform Your Strategy

Many of the signals in this year’s benchmark show how testing habits evolve as systems grow in scale and complexity.

When you examine your own workflows, it can help to look at how your reliability, runtime, and environment coverage have changed over time. Those movements often indicate where attention is needed, especially when certain problem areas appear repeatedly.

We also saw how a smaller group of teams builds momentum more quickly. These organizations make steady progress because they structure their test suites around clear priorities, trim unnecessary steps, and rely on usage data to decide which environments matter most.

They pair virtual environments with real devices when needed, and they treat historical outcomes as part of their decision-making process rather than a record to revisit only when something fails.

AI-supported investigation is becoming another part of this pattern. Teams working with high test volumes increasingly use automated grouping, flaky-test detection, and behavior tracing to shorten the time spent diagnosing failures.

This reduces the amount of rework that accumulates and provides engineers with clearer insight into the source of instability. To study the data behind these findings in greater detail, download the full 2025 Continuous Testing Benchmark Report by TestGrid.