If you work in a regulated industry such as finance, healthcare, or insurance, you’ll agree that trust isn’t optional; it’s everything. The systems used in these sectors handle mission-critical operations such as:

- Budget planning and financial forecasting

- Claim filing and approval workflows

- Tracking life-saving medical shipments like cancer drugs

In short, these systems carry enormous accountability, and when they fail, the cost can be catastrophic.

For instance:

- Global illicit funds flowing through the financial system are estimated at $3.1 trillion in 2023, with money laundering alone accounting for nearly 4% of global GDP.

- The 2024 Change Healthcare breach compromised the data of roughly 190 million people, according to HIPAA Journal, making it the largest healthcare data breach on record.

- Nearly 20% of insured U.S. adults have faced claim denials, often due to system or data errors.

On top of this, AI introduces an entirely new layer of complexity during testing.

Most AI models don’t follow explicit, hand-written rules; they operate on probabilities, pattern recognition, and massive datasets. That makes their reasoning opaque, their results harder to explain, and their failures tougher to trace.

That’s why transparency, accuracy, and auditability aren’t just “nice to have”; they’re the backbone of trustworthy AI testing.

In the sections ahead, we’ll break down what each of these pillars means in practice and how to fortify them in your testing strategy.

Let’s dive in.

The Trust Problem in AI Testing

The core trust problem in AI testing comes from systems that behave correctly but can’t explain why.

In a general sense, testing has always been about ensuring the system works as intended. But AI introduces four unique challenges that make this task harder for testers in regulated environments:

- Opacity of decision-making

Many AI systems, especially deep learning models, operate as “black boxes.”

In finance, healthcare, or insurance, being unable to explain why a claim was denied, a loan was flagged, or a drug candidate was prioritized can undermine regulatory compliance and user trust.

- Data privacy and security

AI systems require large datasets, which increases exposure to breaches, leaks, and misuse.

This is especially concerning under regulations like GDPR (personal data of people in the EU, across all sectors) or HIPAA (U.S. healthcare data).

Example: the 2024 Change Healthcare breach demonstrated how vulnerable large-scale healthcare data systems can be when not properly segmented and tested.

- Bias and fairness risks

AI inherits bias from historical data, and testers must detect and mitigate it.

If unchecked, biased training data can produce unfair outcomes, for instance, certain demographics being more likely to have insurance claims denied or loans delayed.

- Risk of hallucination

Generative AI, in particular, can produce confident yet incorrect answers.

In high-stakes contexts such as medical dosage calculations or fraud detection, this can lead to critical errors and loss of trust.

That’s why addressing transparency, accuracy, and auditability isn’t optional; it’s foundational.

How to Strengthen Transparency, Accuracy, and Auditability in Testing for Regulated Industries

To build trustworthy AI systems in regulated sectors, testers must deliberately design for transparency, accuracy, and auditability, not treat them as afterthoughts.

1. Make AI Transparent and Testable

When testing an AI system, you need to understand how it was trained, how it behaves, and why it produces certain results. That’s the essence of transparency.

Transparency means making model inputs, assumptions, and outputs explainable and reproducible.

Document what data went into the system and what assumptions guided it. For instance, if a claims-processing model was trained mainly on urban hospital data, you’d know its accuracy might decline in rural or mixed settings.

Once you have that in place, design tests that are repeatable and observable.

If you process the same set of claims today and rerun them next month against the same model version, you should expect consistent outcomes.

This repeatability establishes a stable reference point and allows early detection of performance drift.
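One way to picture this kind of repeatability check is a small regression harness that reruns a frozen set of claims and compares each decision with a stored baseline. The sketch below is illustrative only: `drift_report`, `toy_model`, and the claim fields are hypothetical names, and a real setup would call your actual model and load the baseline from versioned storage.

```python
from typing import Callable, Dict, List


def drift_report(
    baseline: Dict[str, str],
    score_claim: Callable[[dict], str],
    claims: List[dict],
) -> dict:
    """Rerun a frozen set of claims and compare decisions with a stored baseline."""
    changed = []
    for claim in claims:
        decision = score_claim(claim)
        if baseline[claim["id"]] != decision:
            changed.append(claim["id"])
    return {
        "total": len(claims),
        "changed_ids": changed,
        "drift_rate": len(changed) / len(claims) if claims else 0.0,
    }


# Hypothetical stand-in for a real model: approves claims under a fixed amount.
def toy_model(claim: dict) -> str:
    return "approve" if claim["amount"] < 1000 else "review"


# Frozen reference set (assumed data, for illustration only).
claims = [
    {"id": "c1", "amount": 250},
    {"id": "c2", "amount": 4000},
    {"id": "c3", "amount": 999},
]

# Record the baseline once, then rerun later and compare.
baseline = {c["id"]: toy_model(c) for c in claims}
report = drift_report(baseline, toy_model, claims)
print(report)
```

A non-zero `drift_rate` on an unchanged model version is a signal to investigate before the discrepancy reaches production or an audit.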

Use model-explainability dashboards and traceable logs to interpret why a model made a given decision. In doing so, you’re no longer asking stakeholders to “trust the system”; you’re showing them evidence they can audit.

2. Measure Accuracy Across Real-World Conditions

Accuracy must be validated under real operational conditions, not just in controlled test environments.

Accuracy is often the first metric people think about. In regulated industries especially, it has three layers that need separate validation:

- Predictive accuracy – How well the model performs on curated test data.

- Operational accuracy – How well it performs in real production environments.

- Fairness accuracy – How consistently it performs across demographic and contextual groups.

Knowing that a model performs well in a controlled test isn’t enough.

You also need to test how accuracy holds up when conditions shift, for example, when data becomes incomplete, noisy, or regionally imbalanced.

Example: a diagnostic AI platform may correctly identify 96% of cancer cases in lab datasets (predictive accuracy) but drop to 82% when used in busy clinics with missing patient information (operational accuracy).

Similarly, an insurance model might show 90% total accuracy but still approve salaried-worker claims 15% more reliably than gig-worker claims (fairness accuracy).

Testing across these layers prevents blind spots that compliance audits will inevitably expose.
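To make the fairness layer concrete, the sketch below computes accuracy separately for each group and reports the largest gap between groups. The record fields (`group`, `predicted`, `actual`), the function names, and the sample data are all assumptions made for illustration; they do not come from any particular tool or dataset.

```python
from collections import defaultdict


def overall_accuracy(records):
    """Share of records where the prediction matches the actual outcome."""
    hits = sum(r["predicted"] == r["actual"] for r in records)
    return hits / len(records)


def accuracy_by_group(records):
    """Accuracy computed separately for each value of the 'group' field."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for r in records:
        totals[r["group"]] += 1
        correct[r["group"]] += int(r["predicted"] == r["actual"])
    return {g: correct[g] / totals[g] for g in totals}


def max_accuracy_gap(per_group):
    """Difference between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())


# Illustrative records only; real evaluations need far larger samples.
records = [
    {"group": "salaried", "predicted": "approve", "actual": "approve"},
    {"group": "salaried", "predicted": "deny", "actual": "deny"},
    {"group": "salaried", "predicted": "approve", "actual": "approve"},
    {"group": "salaried", "predicted": "deny", "actual": "deny"},
    {"group": "gig", "predicted": "approve", "actual": "approve"},
    {"group": "gig", "predicted": "deny", "actual": "approve"},
    {"group": "gig", "predicted": "deny", "actual": "deny"},
    {"group": "gig", "predicted": "deny", "actual": "approve"},
]

per_group = accuracy_by_group(records)
print(overall_accuracy(records))    # 0.75
print(per_group)                    # {'salaried': 1.0, 'gig': 0.5}
print(max_accuracy_gap(per_group))  # 0.5
```

The overall figure looks acceptable on its own, while the per-group breakdown shows one group being served far worse, which is exactly the kind of blind spot a single headline metric hides.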

3. Build Strong Audit Trails for Accountability

Auditability proves that your testing process is traceable, repeatable, and defensible, qualities every regulator demands.

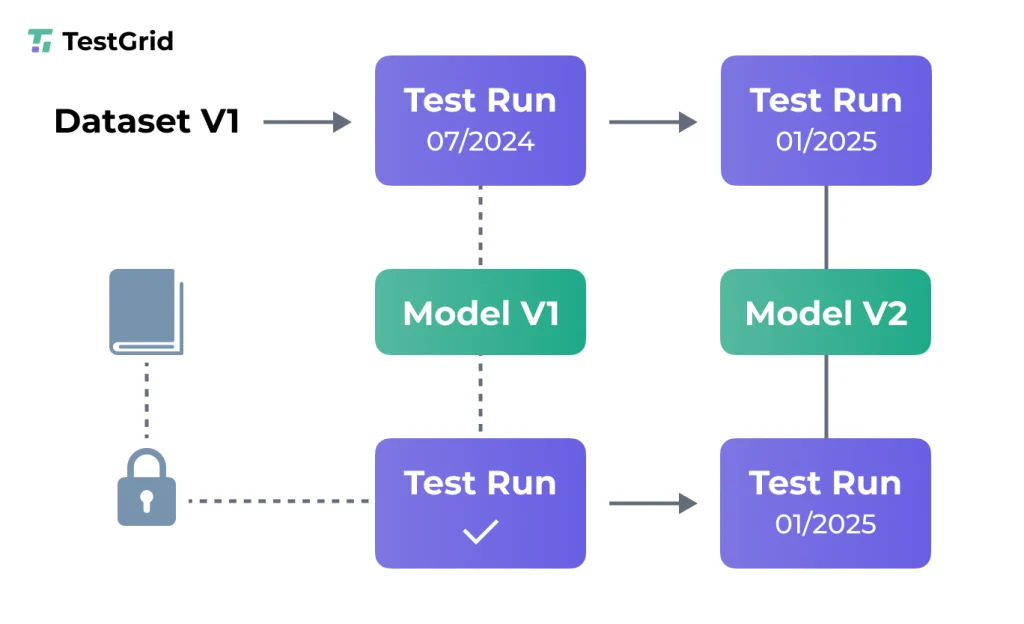

That’s why you should version-control every dataset, model, and configuration.

Example: If a regulator asks how a loan-approval model behaved in 2023, your testing framework should be able to reproduce the exact scenario, parameters, and outputs.
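As a rough sketch of what pinning a scenario can look like, the snippet below fingerprints the dataset and configuration used in a test run with SHA-256 and stores them alongside the model version. `fingerprint`, `build_manifest`, and the sample values are hypothetical; in practice, teams often rely on a dedicated data or model versioning tool rather than hand-rolled hashing.

```python
import hashlib
import json


def fingerprint(obj) -> str:
    """SHA-256 over a canonical JSON encoding, so key order does not matter."""
    canonical = json.dumps(obj, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_manifest(dataset_rows, model_version, config) -> dict:
    """Record exactly which data, model, and settings a test run used."""
    return {
        "dataset_sha256": fingerprint(dataset_rows),
        "model_version": model_version,
        "config_sha256": fingerprint(config),
    }


# Assumed sample inputs, for illustration only.
rows = [{"applicant": "a1", "income": 52000}, {"applicant": "a2", "income": 31000}]
config = {"threshold": 0.7, "region": "EU"}

manifest = build_manifest(rows, "loan-model-2023.4", config)
print(manifest)
```

If a later rerun produces a different `dataset_sha256` or `config_sha256`, the scenario being reproduced is not the one originally tested.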

Next, maintain immutable logs of test runs that cannot be altered retroactively. This ensures you can demonstrate data integrity and procedural compliance during external reviews.
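One common way to approximate this in software is a hash-chained log, where each entry stores the hash of the previous one so that any retroactive edit breaks the chain. The sketch below is a minimal, in-memory illustration with hypothetical names (`append_entry`, `verify_chain`); it makes tampering detectable rather than impossible, and a production setup would typically add write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash the payload together with the previous entry's hash."""
    body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log: list, payload: dict) -> None:
    """Append a test-run record that is chained to the entry before it."""
    prev = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev, "payload": payload, "hash": _entry_hash(prev, payload)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry fails the check."""
    prev = GENESIS
    for entry in log:
        if entry["prev"] != prev:
            return False
        if entry["hash"] != _entry_hash(prev, entry["payload"]):
            return False
        prev = entry["hash"]
    return True


# Assumed test-run records, for illustration only.
log = []
append_entry(log, {"test_case": "TC-101", "result": "pass"})
append_entry(log, {"test_case": "TC-102", "result": "fail"})
print(verify_chain(log))  # True

log[0]["payload"]["result"] = "fail"  # simulate a retroactive edit
print(verify_chain(log))  # False
```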

Finally, link every model output back to its originating test case and business rule. If a healthcare AI predicts drug interactions, testers should be able to show the clinical guidelines or validation datasets used for that test.

Strong audit trails do more than meet compliance checklists; they create proof of reliability and trust continuity over time.

CoTester: An AI Agent Built for Enterprise Software Testing

The challenges around transparency, accuracy, and auditability are not abstract; they show up daily in QA workflows across regulated industries.

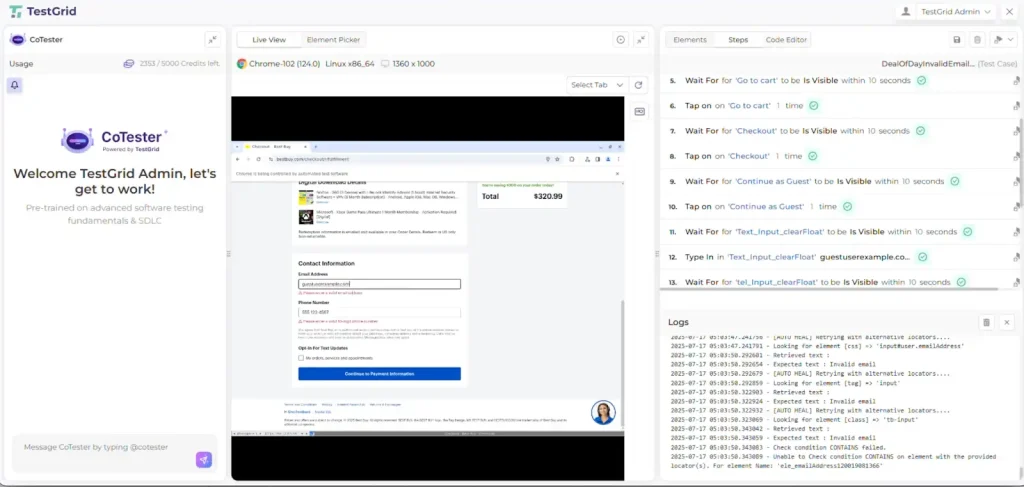

CoTester is TestGrid’s AI-powered testing agent designed specifically to make AI systems transparent, accurate, and auditable at enterprise scale.

Many AI testing tools promise automation, but most fail under real enterprise constraints like compliance requirements, test coverage scalability, and traceability.

That’s the gap CoTester was built to solve.

Think of it as an AI software testing teammate that’s dependable, explainable, and always ready to test alongside you.

Key Features of CoTester:

- Adaptive Learning: Learns your product behavior and generates test cases from functional specs in minutes.

- Human-Like Perception: Understands app visuals, layout, and logic, combining UI, API, and data validation for smarter coverage.

- AgentRx Engine: Auto-heals tests during execution to prevent flakiness while preserving auditability.

- Continuous Oversight: Every decision is logged, versioned, and reviewable for compliance teams.

- Enterprise Deployment: Run securely on private cloud or on-prem, ensuring full data ownership and IP control.

Whether you’re in finance, healthcare, insurance, or eCommerce, CoTester offers trust-level assurance where compliance, precision, and speed must coexist.

CoTester doesn’t replace your QA team; it amplifies their accuracy and transparency with explainable AI. Book a Demo to find out more.

Frequently Asked Questions (FAQs)

What does the road ahead look like for AI testing?

AI testing is shifting from functionality checks to governance validation.

Regulations and frameworks such as the EU AI Act, the NIST AI Risk Management Framework, and emerging ISO/IEC guidance on AI testing are formalizing how explainability, bias, and reproducibility are audited.

Will independent auditors play a bigger role?

Yes. External AI audits are increasingly expected under emerging compliance regimes, particularly for high-risk systems.

Independent assessors will review whether your AI testing practices hold up under scrutiny.

For testers, that means more accountability, but also greater recognition as compliance gatekeepers.

How can testers prepare for this future?

Adaptability is key. AI models evolve, data drifts, and new risks emerge. What stays constant is the need for provable trust.

By focusing on visibility, evidence, and reproducibility, testers can help their organizations adopt AI responsibly while meeting regulatory demands.