- What Is a User Story in Testing and Why Does It Matter?

- What are the Main Components of a User Story?

- What Should a Good User Story Look Like?

- How Does User Story Testing Work?

- How CoTester Helps Align Tests Better with User Stories

- Common Mistakes and Best Practices You Must Know

- What Next?

- Frequently Asked Questions (FAQs)

QA teams often prioritize testing code, functions, and workflows. But how do you verify if a particular feature actually delivers value to your users?

Say you release a new dashboard feature, and all the test cases pass. But when users start interacting with it, you notice that engagement is low or that key actions are ignored. This often happens because the feature, although it works, isn’t helping users achieve their goals.

User story testing assesses your features against relevant user stories, which helps you ensure they don’t just function correctly, but genuinely solve a user problem.

In this blog, we discuss what user stories are in the context of testing, what makes an ideal user story, and how you can convert user stories into structured test cases.

What Is a User Story in Testing and Why Does It Matter?

User story testing is a process where test cases are derived directly from user stories and their acceptance criteria. The goal here is to verify if a feature behaves as expected from an end user’s perspective.

User story testing is critical because it helps:

- Improve confidence in your product quality

- Keep your features focused on users

- Confirm features deliver measurable value to users

- Catch gaps between requirements and implementation

- Align developers, testers, and business teams so they collaborate better and share the same understanding of the feature

Who should be involved in user story testing

Now that you have an idea of what user story testing is, take a look at the roles that contribute to it.

| Role | Responsibility |
| --- | --- |
| Product owner or business analyst | Define the business intent behind each user story, clarify requirements, and resolve ambiguities; ensure acceptance criteria accurately reflect user needs |
| QA engineers | Translate user stories and acceptance criteria into structured test cases that verify functional flows, edge cases, and negative scenarios |
| Developers | Write unit and integration tests that align with user stories; ensure the story is ready for testing and catch defects earlier in the development cycle |
| UX/design | Check whether the interactions, layouts, and transitions of your app’s UI meet usability expectations and deliver a smooth user experience |


What are the Main Components of a User Story?

A robust user story serves as the blueprint for both development and quality assurance. To ensure clarity, every story should integrate these three foundational elements:

1. Standard user story template

Every user story must follow the core template: As a [user], I want [goal] so that [benefit].

For example: As a customer, I want to reset my password using email verification so that I can regain access to my account.

This format helps you identify who the user is, what they want to accomplish, and why it matters. As a result, you focus on outcomes rather than features and build test scenarios that verify the intended user value.
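To see how the template maps onto testable fields, here is a minimal Python sketch that splits a story into its user, goal, and benefit. The regular expression and the `UserStory` and `parse_user_story` names are illustrative assumptions, not part of any tool; real stories vary in wording, so treat this as a rough format check rather than a robust parser.

```python
import re
from dataclasses import dataclass

# Assumed pattern for "As a [user], I want [goal] so that [benefit]."
# Real stories vary in wording, so this is a simplification.
STORY_PATTERN = re.compile(
    r"^As an? (?P<user>.+?), I want (?:to )?(?P<goal>.+?),? so that (?P<benefit>.+?)\.?$",
    re.IGNORECASE,
)


@dataclass
class UserStory:
    user: str
    goal: str
    benefit: str


def parse_user_story(text: str) -> UserStory:
    """Split a template-formatted story into user, goal, and benefit."""
    match = STORY_PATTERN.match(text.strip())
    if match is None:
        raise ValueError(f"Story does not follow the template: {text!r}")
    return UserStory(**match.groupdict())


story = parse_user_story(
    "As a customer, I want to reset my password using email verification "
    "so that I can regain access to my account."
)
print(story.user)     # customer
print(story.goal)     # reset my password using email verification
print(story.benefit)  # I can regain access to my account
```

A story with no “so that” clause, such as “As a user, I want better security”, fails this check, which is a quick hint that its value has not been stated.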

2. Acceptance criteria

Acceptance criteria are the conditions a user story must satisfy to be considered complete, or “done”. They are concise, testable statements that describe what the user should be able to do and what they should see as a result.

A well-written set of acceptance criteria aligns product, development, and testing teams on a single shared understanding of “done”. It serves as a primary input for designing test cases.

Let’s look at an example to better understand how the acceptance criteria should ideally be structured.

Consider this user story: As a customer, I want to reset my password using email verification so that I can regain access to my account.

Its acceptance criteria could be:

- The user receives a password reset link at the registered email address

- The reset link expires after 10 minutes

- The password meets the security requirements

- A confirmation is shown after the password is successfully reset
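One way to confirm that every criterion above is testable is to write one automated check per criterion. The Python sketch below uses a hypothetical in-memory `PasswordResetService`; the token format, the single-use link, and the password rules (at least 12 characters including a digit) are placeholders, because the story does not define them. Only the 10-minute expiry comes from the criteria.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

LINK_TTL = timedelta(minutes=10)  # "The reset link expires after 10 minutes"


def meets_security_rules(password: str) -> bool:
    # Assumed rules for illustration; the story does not define them.
    return len(password) >= 12 and any(c.isdigit() for c in password)


@dataclass
class PasswordResetService:
    """Hypothetical in-memory stand-in for the system under test."""
    registered_email: str
    outbox: list[tuple[str, str]] = field(default_factory=list)   # (recipient, token)
    issued_at: dict[str, datetime] = field(default_factory=dict)  # token -> issue time

    def request_reset(self, email: str, now: datetime) -> None:
        if email != self.registered_email:
            return  # assumption: unknown addresses get no link
        token = f"token-{len(self.outbox) + 1}"
        self.issued_at[token] = now
        self.outbox.append((email, token))

    def reset_password(self, token: str, new_password: str, now: datetime) -> str:
        issued = self.issued_at.get(token)
        if issued is None or now - issued > LINK_TTL:
            return "Reset link has expired"
        if not meets_security_rules(new_password):
            return "Password does not meet security requirements"
        del self.issued_at[token]  # assumption: each link works only once
        return "Your password has been reset"


T0 = datetime(2024, 1, 1, 9, 0)


def requested_token() -> tuple[PasswordResetService, str]:
    # Shared setup: a registered user requests a reset link at T0.
    svc = PasswordResetService(registered_email="ana@example.com")
    svc.request_reset("ana@example.com", now=T0)
    return svc, svc.outbox[0][1]


# One test per acceptance criterion.
def test_link_sent_to_registered_email():
    svc, _ = requested_token()
    assert svc.outbox[0][0] == "ana@example.com"


def test_link_expires_after_ten_minutes():
    svc, token = requested_token()
    late = T0 + timedelta(minutes=11)
    assert svc.reset_password(token, "NewPassword2024", now=late) == "Reset link has expired"


def test_password_must_meet_security_rules():
    svc, token = requested_token()
    result = svc.reset_password(token, "short", now=T0 + timedelta(minutes=1))
    assert result == "Password does not meet security requirements"


def test_confirmation_shown_after_reset():
    svc, token = requested_token()
    result = svc.reset_password(token, "NewPassword2024", now=T0 + timedelta(minutes=1))
    assert result == "Your password has been reset"
```

The test functions follow pytest’s `test_` naming convention, so pytest can collect them, but you can also call them directly.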

3. Supporting artifacts

These artifacts provide the additional context you need to fully understand and test a user story, which improves test accuracy. Supporting artifacts can include UX mockups, API contracts, mock responses, data models, and non-functional requirements such as performance or security expectations.

What Should a Good User Story Look Like?

A good user story clearly captures who the primary user is, what they need, and why it matters. To achieve this, agile teams often use the INVEST principle as a guide to creating meaningful user stories.

INVEST is an acronym for:

1. Independent

A user story should be conceptually independent and not rely on the completion of other user stories. Independent stories are easier to validate in isolation, simplify prioritization, and enable parallel testing. Plus, when stories do not depend on each other, defects are much easier to trace.

2. Negotiable

The user story should be flexible and open to discussion. You should not treat it as a rigid contract. This encourages collaboration between product owners, developers, and testers to refine details as understanding evolves. This also helps your team clarify assumptions and adjust scope to ensure the story actually reflects user needs.

3. Valuable

The main purpose of a user story is to provide a benefit to end users or the business, so every story should justify why it exists. If you cannot state the value of a feature while writing its user story, reconsider whether the feature is necessary.

4. Estimable

Your team should be able to reasonably assess the effort required to implement and test the user story. Clear scope, known dependencies, and sufficient context make this possible. If the team cannot estimate the effort required to implement and test a story, it signals missing details or technical uncertainty.

5. Small

User stories should not be long, detailed documents. Smaller stories are easier to design, develop, and test. Ideally, each story should be small enough to complete within a single iteration of the development process.

6. Testable

A testable user story has measurable acceptance criteria that define when it’s complete and allow both functional and non-functional expectations to be verified. Unclear conditions increase ambiguity, defects, and the risk of unmet user expectations.


Let’s quickly compare a weak user story with a strong one.

| Weak user story | Strong user story |
| --- | --- |
| As a user, I want better security | As a registered user, I want to enable multi-factor authentication so that my account remains secure |

In the example above, the weak user story doesn’t specify who the user is, what kind of security improvement they need, or how success will be measured. “Better security” is subjective, so you cannot estimate effort, design acceptance criteria, or test it effectively.

The strong story defines who the user is (registered user), the functionality they are looking for (multi-factor authentication), and the value (account security).

Examples of user stories

1. Project management

User story: As a project manager, I want to track my team’s progress so that I can ensure tasks align with business goals.

- User: Project manager

- Goal: Track team progress

- Benefit: Align tasks with business goals

2. Customer support

User story: As a support agent, I want to view a customer’s interaction history in one place so that I can resolve issues faster.

- User: Support agent

- Goal: Centralize customer interaction data

- Benefit: Faster issue resolution

3. E-commerce

User story: As an online shopper, I want to save items to a wishlist so that I can purchase them later.

- User: Online shopper

- Goal: Bookmark products for future reference

- Benefit: Smoother purchasing experience

How Does User Story Testing Work?

1. Validate requirements against user needs

First, review the user story and its acceptance criteria to confirm they are consistent with your user personas and flows. This step is critical for identifying gaps, incorrect assumptions, missing scenarios, or unnecessary functionality, and for ensuring the feature being built actually solves the problem described in the story.

2. Perform tests to verify that features align with the user story and its acceptance criteria

Derive test scenarios from the user story and its acceptance criteria to assess whether the feature behaves as expected across different user actions, conditions, and data inputs.

3. Gather feedback from stakeholders and users

Feedback from stakeholders like product owners or business teams helps you evaluate whether the feature meets the business goals. User feedback highlights usability issues or gaps in expectations. This feedback loop guides you in refining user stories, updating acceptance criteria, and improving future testing.


4. Drive continuous improvement through iteration

User story testing supports continuous improvement through iteration. Test results, prompt feedback, and evolving user behavior allow you to adjust requirements and optimize test coverage.

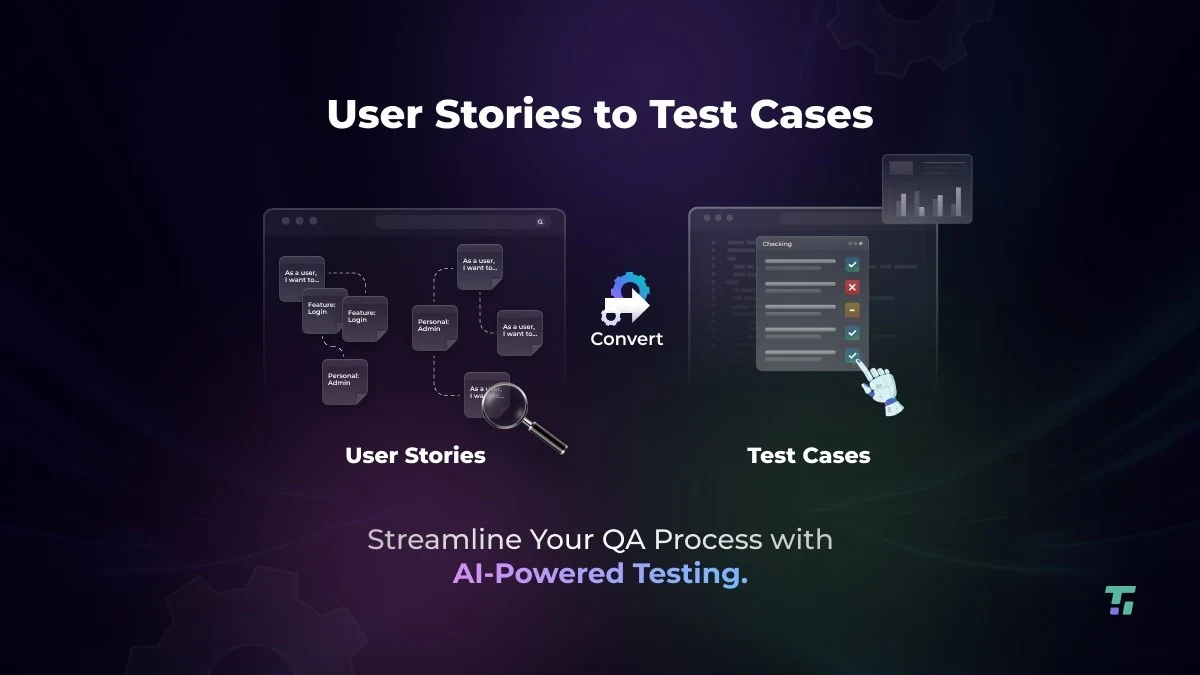

How CoTester Helps Align Tests Better with User Stories

CoTester is an AI agent built for software testing that lets you build tests directly from user stories. You can upload or link your stories from Jira, and CoTester automatically converts specifications into complete, executable test logic, which helps you reduce manual interpretation gaps.

With CoTester, you can design tests that adapt dynamically as your stories, requirements, flows, and UI elements change.

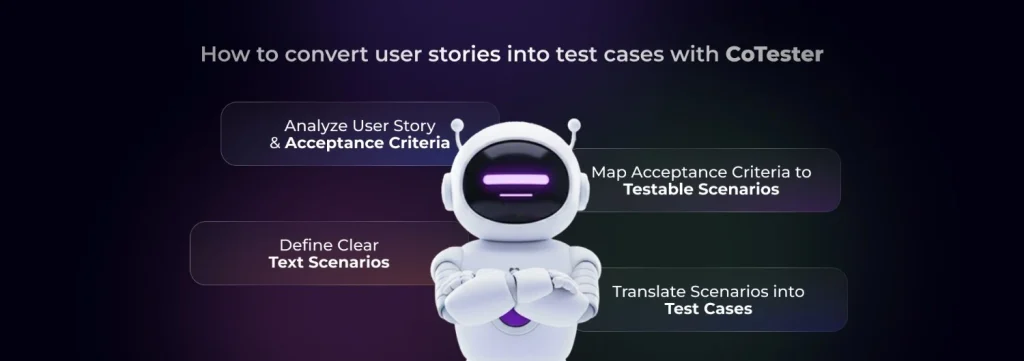

How to convert user stories into test cases with CoTester

1. Analyze the user story and its acceptance criteria thoroughly

The first step is to read the user story closely so you know who the user is, the action they want to perform, and the expected outcome. Then carefully review the acceptance criteria to understand the conditions under which the user story is considered complete.

This step is critical to uncover assumptions and identify the functional and non-functional expectations that you will use to create test cases.

2. Break acceptance criteria into testable scenarios

Divide each criterion into small, testable scenarios. Each scenario should describe a goal, an action, and the expected result from the user’s perspective. Include positive paths, negative cases, and edge conditions so you can confirm that your tests reflect how the feature will be used in real user environments.
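As an illustration, the Python sketch below applies this to the e-commerce wishlist story from the examples earlier, recording one positive, one negative, and one edge scenario, each with a goal, the actions taken, and the expected result. The `Wishlist` class and its rules (unknown products are rejected, a product is not saved twice) are hypothetical assumptions made for the example.

```python
from dataclasses import dataclass


class Wishlist:
    """Hypothetical wishlist used only for illustration."""

    def __init__(self, catalog: list[str]):
        self.catalog = set(catalog)
        self.items: list[str] = []

    def save(self, product_id: str) -> str:
        if product_id not in self.catalog:
            return "error: unknown product"
        if product_id in self.items:
            return "already saved"  # assumed rule: no duplicates
        self.items.append(product_id)
        return "saved"


@dataclass
class Scenario:
    kind: str            # "positive", "negative", or "edge"
    goal: str
    actions: list[str]   # product IDs the shopper tries to save, in order
    expected: str        # expected result of the last action


SCENARIOS = [
    Scenario("positive", "Save an available product", ["sku-1"], "saved"),
    Scenario("negative", "Save a product that does not exist", ["sku-999"], "error: unknown product"),
    Scenario("edge", "Save the same product twice", ["sku-1", "sku-1"], "already saved"),
]


def run(scenario: Scenario) -> bool:
    # Each scenario starts from a fresh wishlist so results stay independent.
    wishlist = Wishlist(catalog=["sku-1", "sku-2"])
    result = None
    for product_id in scenario.actions:
        result = wishlist.save(product_id)
    return result == scenario.expected


for s in SCENARIOS:
    print(f"[{s.kind}] {s.goal}: {'PASS' if run(s) else 'FAIL'}")
```

Keeping the scenarios as data makes gaps visible at a glance: if a story has no negative or edge entry, it has not been covered yet.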


3. Define Given-When-Then test scenarios

Structure your test scenarios using the Given-When-Then format, clearly describing the flow of a feature. Here’s an example:

- Given the user is on the login page

- When they enter valid credentials and click Login

- Then they should be redirected to the dashboard
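The same scenario can be written as an automated test whose comments mirror the Given, When, and Then steps. This is a minimal Python sketch: `FakeApp` is a hypothetical stand-in for your application or browser driver, not a real automation API, and the second test is an added negative scenario for illustration.

```python
class FakeApp:
    """Hypothetical stand-in for a UI session; not a real browser-driver API."""

    def __init__(self, users: dict[str, str]):
        self.users = users        # username -> password
        self.current_page = ""
        self.error = ""

    def open(self, page: str) -> None:
        self.current_page = page

    def login(self, username: str, password: str) -> None:
        # Simulates entering credentials and clicking Login.
        if self.current_page != "login":
            raise RuntimeError("The login form is only on the login page")
        if self.users.get(username) == password:
            self.current_page = "dashboard"
            self.error = ""
        else:
            self.error = "Invalid username or password"


def test_valid_login_redirects_to_dashboard():
    # Given the user is on the login page
    app = FakeApp(users={"ana": "s3cret-pass"})
    app.open("login")
    # When they enter valid credentials and click Login
    app.login("ana", "s3cret-pass")
    # Then they should be redirected to the dashboard
    assert app.current_page == "dashboard"


def test_invalid_login_shows_error():
    # Given the user is on the login page
    app = FakeApp(users={"ana": "s3cret-pass"})
    app.open("login")
    # When they enter a wrong password and click Login
    app.login("ana", "wrong-pass")
    # Then they stay on the login page and see an error
    assert app.current_page == "login"
    assert app.error == "Invalid username or password"
```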

You can share your Given-When-Then test scenarios in the form of prompts to CoTester. It understands natural language inputs and automatically generates tests for you.

4. Translate scenarios into test cases

Convert your test scenarios into executable test cases by defining clear test steps, expected results, preconditions, and required test data. Keep each test case focused on a single behavior so the test is precise and repeatable.
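A test case can be captured as a small record that holds exactly these fields. The Python sketch below shows one possible layout, using hypothetical IDs such as `TC-001` and `STORY-101`; it keeps a single expected result per case so each case stays focused on one behavior.

```python
from dataclasses import dataclass, field


@dataclass
class StoryTestCase:
    case_id: str
    story_id: str               # hypothetical ticket key of the source user story
    title: str
    preconditions: list[str]
    steps: list[str]
    expected_result: str        # one expected result keeps the case to one behavior
    test_data: dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        """Format the case as a readable checklist for manual or automated use."""
        lines = [f"{self.case_id} ({self.story_id}): {self.title}", "Preconditions:"]
        lines += [f"  - {p}" for p in self.preconditions]
        lines.append("Steps:")
        lines += [f"  {i}. {s}" for i, s in enumerate(self.steps, start=1)]
        lines.append(f"Expected: {self.expected_result}")
        if self.test_data:
            lines.append("Test data: " + ", ".join(f"{k}={v}" for k, v in self.test_data.items()))
        return "\n".join(lines)


tc = StoryTestCase(
    case_id="TC-001",
    story_id="STORY-101",
    title="Valid credentials redirect to the dashboard",
    preconditions=["User account exists", "User is on the login page"],
    steps=["Enter the username", "Enter the password", "Click Login"],
    expected_result="The dashboard is displayed",
    test_data={"username": "ana", "password": "s3cret-pass"},
)
print(tc.render())
```

Storing the story ID on every case also makes it straightforward to trace cases back to the story they verify.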

With CoTester, you can switch between scriptless, record-and-play, and code-based approaches when you need more control over how test cases are generated.


Common Mistakes and Best Practices You Must Know

1. Designing vague acceptance criteria

If your acceptance criteria are unclear, your team has to rely on assumptions when implementing and testing the story. This increases the risk of missing edge cases and leads to inconsistent testing outcomes. Testers may struggle to determine whether the story is actually complete, and developers may implement misaligned functionality.

Best practice: Make sure the acceptance criteria are specific, measurable, and outcome-driven. Outline conditions, expected behavior, and success thresholds so that every stakeholder knows when the user story is complete.
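As a rough aid, a simple script can flag subjective words in acceptance criteria before a story is accepted for development. This is a heuristic sketch, not a substitute for review: the `VAGUE_TERMS` list is an assumption you would tune for your team, and the check only finds the words on that list.

```python
import re

# Assumed list of subjective words that often signal an untestable criterion.
VAGUE_TERMS = {
    "better", "fast", "quickly", "easy", "user-friendly",
    "intuitive", "appropriate", "properly", "seamless",
}


def vague_terms(criterion: str) -> list[str]:
    """Return the listed subjective words found in a criterion, in order."""
    # Lowercase words, keeping hyphenated compounds such as "user-friendly".
    words = re.findall(r"[a-z]+(?:-[a-z]+)*", criterion.lower())
    return [w for w in words if w in VAGUE_TERMS]


CRITERIA = [
    "The page loads fast",
    "The reset link expires after 10 minutes",
    "The checkout flow is easy and intuitive",
]

for criterion in CRITERIA:
    found = vague_terms(criterion)
    print(("REVIEW: " + ", ".join(found)) if found else "OK", "|", criterion)
```

A flagged criterion such as “The page loads fast” is a prompt to agree on a measurable threshold with the product owner.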

2. Testing code instead of behavior

Focusing only on internal code instead of on what the user sees and does in the app produces tests that don’t reflect real user interactions. As a result, your tests may pass even when the user journey is incomplete or broken.

Best practice: Test observable user behavior. Establish what the user is trying to achieve before deriving test cases from the user story. This helps you ensure that your tests match user intent and real workflows.

3. Covering only the happy paths

Testing only the happy paths gives you a false sense of confidence in the quality of the feature. Real users often enter invalid input, navigate through pages in unexpected ways, and encounter errors, so your test scenarios must account for this unpredictability.

Best practice: Include negative and edge cases when you derive test scenarios from user stories. Assess how your app behaves when it faces invalid input, boundary conditions, and failure scenarios. This way, your tests stay consistent with how users actually use the app.
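Boundary value analysis is a common way to cover edge cases. The Python sketch below applies it to the password reset criterion “The reset link expires after 10 minutes”. `is_link_valid` is a hypothetical helper, and it assumes a link used at exactly 10:00 is still accepted. The criterion itself does not say, which is exactly the kind of question boundary tests force the team to answer.

```python
from datetime import datetime, timedelta

LINK_TTL = timedelta(minutes=10)  # from the acceptance criterion


def is_link_valid(issued_at: datetime, used_at: datetime) -> bool:
    # Assumptions: a link used at exactly 10:00 is still accepted,
    # and a use timestamp earlier than the issue time is rejected.
    elapsed = used_at - issued_at
    return timedelta(0) <= elapsed <= LINK_TTL


ISSUED = datetime(2024, 1, 1, 9, 0, 0)

# (description, time after issue, expected validity)
BOUNDARY_CASES = [
    ("used immediately", timedelta(0), True),
    ("just inside the window", timedelta(minutes=9, seconds=59), True),
    ("exactly at the limit", timedelta(minutes=10), True),
    ("just past the limit", timedelta(minutes=10, seconds=1), False),
    ("timestamp before issue (clock skew)", timedelta(seconds=-1), False),
]

for description, offset, expected in BOUNDARY_CASES:
    actual = is_link_valid(ISSUED, ISSUED + offset)
    print(f"{'PASS' if actual == expected else 'FAIL'}: {description}")
```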

4. No traceability between stories and tests

Traceability between test cases and user stories shows you which requirements have been verified and which have not. Without it, your team may struggle to assess coverage, track quality status, or identify how requirement changes have affected existing tests.

Best practice: Link test cases, executions, and results directly to the user story ticket so you get clear visibility into coverage. This also simplifies audits and makes it easy to verify that a story meets its acceptance criteria before it’s closed.
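A traceability check can be as simple as mapping each test result to the story and acceptance criterion it verifies. The Python sketch below uses made-up story keys, criterion IDs, and results; in practice this data would come from your issue tracker and test runner, and `coverage_report` is a hypothetical helper rather than part of any tool.

```python
from collections import defaultdict

# Hypothetical data: story keys, criterion IDs, and results are made up.
ACCEPTANCE_CRITERIA = {
    "STORY-101": ["AC1", "AC2", "AC3", "AC4"],
    "STORY-102": ["AC1", "AC2"],
}

# Each execution records which story criterion it verifies and whether it passed.
TEST_RESULTS = [
    {"test": "TC-001", "story": "STORY-101", "criterion": "AC1", "passed": True},
    {"test": "TC-002", "story": "STORY-101", "criterion": "AC2", "passed": False},
    {"test": "TC-003", "story": "STORY-101", "criterion": "AC3", "passed": True},
    {"test": "TC-004", "story": "STORY-102", "criterion": "AC1", "passed": True},
    {"test": "TC-005", "story": "STORY-102", "criterion": "AC2", "passed": True},
]


def coverage_report(criteria, results):
    """Per story: untested criteria, criteria with a failing test, and readiness to close."""
    outcomes = defaultdict(list)  # (story, criterion) -> list of pass/fail results
    for r in results:
        outcomes[(r["story"], r["criterion"])].append(r["passed"])
    report = {}
    for story, acs in criteria.items():
        untested = [ac for ac in acs if not outcomes[(story, ac)]]
        failing = [ac for ac in acs if outcomes[(story, ac)] and not all(outcomes[(story, ac)])]
        report[story] = {
            "untested": untested,
            "failing": failing,
            "ready_to_close": not untested and not failing,
        }
    return report


for story, status in coverage_report(ACCEPTANCE_CRITERIA, TEST_RESULTS).items():
    print(story, status)
```

With this sample data, STORY-101 is flagged as not ready to close because AC4 has no test and AC2 has a failing one, while STORY-102 is ready.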

What Next?

As AI-powered software testing platforms mature, we can expect user story testing to become more advanced. Intelligent systems will analyze user stories to identify ambiguities and missing acceptance criteria, and recommend test scenarios that cover unexpected and edge cases.

We may also see AI platforms automatically adapt test cases when user stories are updated, by analyzing what changed in the requirements and how those changes affect test scenarios. This shift will help reduce maintenance and keep tests aligned with real user intent.

And CoTester is built for this future, helping modern testing teams turn user stories into intelligent, self-adapting tests. To experience agentic automation in user story testing, start a Free Trial today.

Frequently Asked Questions (FAQs)

1. What is a user story in software testing?

A user story in software testing is a requirement expressed in terms of a user’s goal. It guides testers in assessing whether the implemented feature supports real user behavior and meets the business objectives.

2. What is a user story in manual testing?

In manual testing, a user story is a short description of a feature that states a task a user wants to achieve. It guides testers in creating and executing functional, exploratory, and negative test scenarios to verify that the feature meets user intent.

3. How to write an effective user story?

Start by following the INVEST principle, design specific and measurable acceptance criteria, and focus on user behavior to ensure the story is easy to understand, estimate, and test. Make sure it’s written in simple language and clearly defines the user, goal, and value.

4. Who is responsible for user story testing?

User story testing is performed using a collaborative approach where product owners design the acceptance criteria, developers write code to ensure the feature meets the criteria, testers build and execute tests, and UX designers validate if stories translate into usable experiences.

5. What types of tests are created from user stories?

User stories can drive several types of tests, including functional, acceptance, negative, and exploratory tests. Unit tests are typically derived from design or code-level specifications, although developers can align them with a story’s acceptance criteria.